Anthropic, an artificial intelligence (AI) company, has begun rolling out a new security feature in Claude Code that can find vulnerabilities in a user's software codebase and recommend fixes. The capability, called Claude Code Security, is available to Enterprise and Team customers in a limited research preview. According to a Friday announcement from the company, "It scans codebases for security vulnerabilities and suggests targeted software patches for human review, allowing teams to find and fix security issues that traditional methods often miss."

According to Anthropic, the goal of the feature is to use AI to help defenders identify and fix vulnerabilities, countering attacks in which threat actors use the same tools to automate vulnerability discovery.

The company said that as AI agents become better at spotting security flaws that humans would otherwise miss, adversaries may be able to use those same capabilities to find exploitable vulnerabilities faster than before. Claude Code Security, it added, is designed to raise the security baseline and give defenders an edge against this kind of AI-enabled attack. According to Anthropic, the tool goes beyond static analysis and pattern scanning by reasoning through a codebase the way a human security researcher would: understanding how components interact, tracing data flows through the application, and catching vulnerabilities that rule-based tools might overlook.

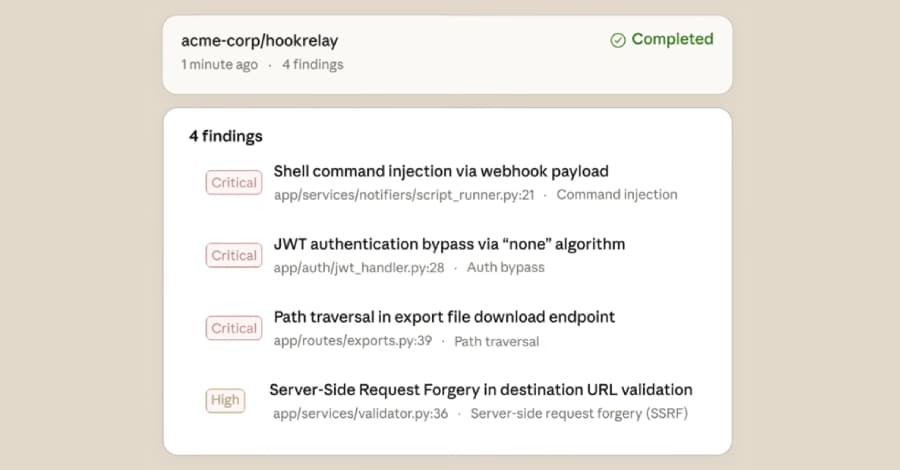

Each vulnerability found then goes through what the company describes as a "multi-stage verification process," in which the results are re-examined to filter out false positives. Every finding is also assigned a severity rating to help teams focus on the most critical issues. The final results appear in the Claude Code Security dashboard, where analysts can review the affected code and the suggested patches and approve them.

Anthropic also stressed that the system follows a human-in-the-loop (HITL) approach to decision-making. "Claude also provides a confidence rating for each finding because these issues often involve nuances that are difficult to assess from source code alone," the company said.

The company added: "Nothing is implemented without human approval: developers always make the final decision, even though Claude Code Security finds issues and offers fixes."
