For years, security teams have been building identity and access controls for both people and service accounts. But a new kind of actor has quietly made its way into most enterprise environments, and it operates entirely outside those controls.

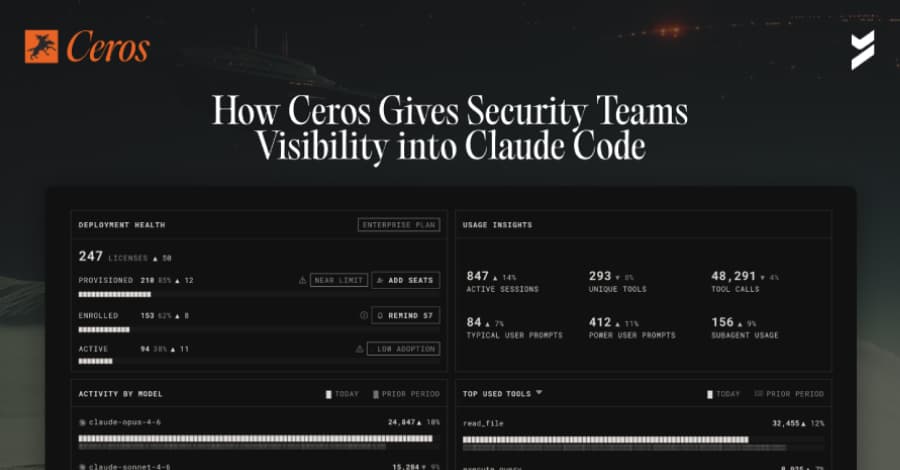

Claude Code, Anthropic's AI coding agent, is now used at scale across engineering organizations. It can read files, run shell commands, call external APIs, and connect to third-party integrations known as MCP (Model Context Protocol) servers. It does all of this autonomously, with the developer's full permissions, on the developer's local machine, before any network-layer security tool can see it.

In Ceros, the Conversations view lists every session between a developer and Claude Code across all enrolled devices, sorted by user, device, and time.

Clicking any session opens the full conversation between the developer and the agent. Between the prompts and responses, it also shows something else: tool calls. When a developer asks Claude Code something as simple as "what files are in my directory?", the LLM doesn't simply know the answer. Instead, it instructs the agent to run a tool on the local machine, in this case the Bash tool with the command `ls -la`.

Ceros also checks device posture continuously throughout the session, not just at login. For example, if endpoint protection is turned off while Claude Code is running, Ceros detects the change and takes action according to policy.

The Activity Log provides auditable proof of all this, and it is where compliance teams see how Ceros relates directly to their work.

Each entry is more than a record: it is a forensic snapshot of the environment at the exact moment Claude Code was invoked. A single log entry captures:

- the device's full security posture at that moment
- the complete process ancestry, showing every process in the chain that invoked Claude Code
- binary signatures for every executable in that ancestry
- the OS-level user identity, tied to a verified human
- every action Claude Code took during the session
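To make the contents of an entry concrete, here is a minimal Python sketch of how such a log entry might be modeled and checked by an auditor. The schema is an assumption: the class names (`ActivityLogEntry`, `ProcessInfo`, `ToolCall`), the field names, the `audit_findings` helper, and all sample values (user names, paths, PIDs) are hypothetical and are not Ceros's actual log format or API.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessInfo:
    """One process in the chain that led to Claude Code (hypothetical schema)."""
    pid: int
    executable: str
    signature_valid: bool  # whether the binary's code signature verified


@dataclass
class ToolCall:
    """One tool invocation by the agent, e.g. the Bash tool running `ls -la`."""
    tool: str
    command: str


@dataclass
class ActivityLogEntry:
    """Hypothetical shape of one Activity Log entry; names are illustrative only."""
    os_user: str
    verified_human: str
    device_posture: dict[str, bool]        # posture check name -> passed?
    process_ancestry: list[ProcessInfo]    # ordered from root process to Claude Code
    actions: list[ToolCall] = field(default_factory=list)


def audit_findings(entry: ActivityLogEntry) -> list[str]:
    """Return findings an auditor might flag: failed posture checks and bad signatures."""
    findings = []
    for check, passed in entry.device_posture.items():
        if not passed:
            findings.append(f"posture check failed: {check}")
    for proc in entry.process_ancestry:
        if not proc.signature_valid:
            findings.append(
                f"unsigned or invalid binary in ancestry: {proc.executable} (pid {proc.pid})"
            )
    return findings


# Hypothetical entry: endpoint protection is off while Claude Code runs `ls -la`.
entry = ActivityLogEntry(
    os_user="jdoe",
    verified_human="jane.doe@example.com",
    device_posture={"endpoint_protection_enabled": False, "disk_encrypted": True},
    process_ancestry=[
        ProcessInfo(pid=1, executable="/sbin/launchd", signature_valid=True),
        ProcessInfo(pid=812, executable="/bin/zsh", signature_valid=True),
        ProcessInfo(pid=4051, executable="/usr/local/bin/claude", signature_valid=True),
    ],
    actions=[ToolCall(tool="Bash", command="ls -la")],
)

for finding in audit_findings(entry):
    print(finding)  # prints "posture check failed: endpoint_protection_enabled"
```

Keeping posture, ancestry, identity, and actions together in one record is what lets a single entry answer "who ran what, from where, on a device in what state" without joining separate data sources.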