A previously unknown flaw in OpenAI's ChatGPT allowed sensitive conversation data to be exfiltrated without the user's knowledge or consent. There is no evidence that the issue was ever exploited in the wild.

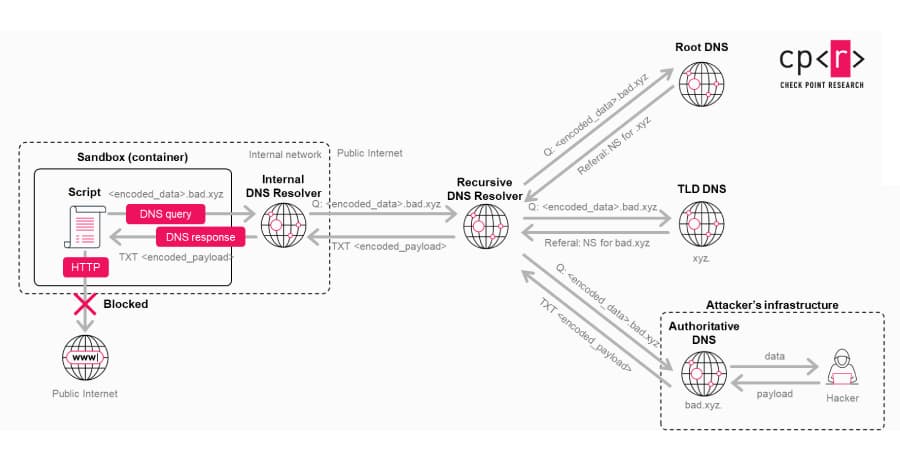

Separately, threat actors have been observed distributing web browser extensions that use a technique dubbed "prompt poaching" to covertly siphon AI chatbot conversations without users' consent. "As AI platforms become full computing environments that handle our most sensitive data, native security controls are no longer enough on their own," Check Point said.

BeyondTrust said the problem stems from improper input sanitization when GitHub branch names are processed during cloud task execution.
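To make the root cause concrete, here is a minimal sketch of why an unsanitized branch name is dangerous and one common mitigation. It does not reproduce Codex's code. It assumes, hypothetically, that a branch name ends up in a command line. Git ref names may legally contain shell metacharacters such as `;` and `$`, so interpolating one into a shell string can run attacker-chosen commands. The function names and the allowlist pattern are illustrative assumptions. The mitigation shown combines a conservative allowlist with passing arguments as a list so that no shell ever parses them.

```python
from __future__ import annotations

import re

# Hypothetical allowlist, deliberately stricter than Git's own ref-name rules:
# letters, digits, '.', '_', '/', '-', and the name must not start with '-'
# (which a command-line tool could parse as an option).
_SAFE_BRANCH = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,199}")


def is_safe_branch_name(name: str) -> bool:
    """Return True only for branch names matching the conservative allowlist."""
    if not _SAFE_BRANCH.fullmatch(name):
        return False
    # Reject patterns that Git itself forbids in ref names.
    if ".." in name or "//" in name or name.endswith(("/", ".", ".lock")):
        return False
    return True


def checkout_argv(branch: str) -> list[str]:
    """Build an argument vector for `git checkout`, meant to run without a shell."""
    if not is_safe_branch_name(branch):
        raise ValueError(f"refusing suspicious branch name: {branch!r}")
    # Passing this list to subprocess.run(...) with the default shell=False
    # means no shell ever interprets the branch name.
    return ["git", "checkout", branch]


def unsafe_shell_command(branch: str) -> str:
    """Anti-pattern: untrusted input interpolated into a shell command string."""
    return f"git checkout {branch}"


if __name__ == "__main__":
    malicious = "main;echo${IFS}pwned"
    # In a shell, the ';' would end the git command and start a new one.
    print(unsafe_shell_command(malicious))
    print(is_safe_branch_name("feature/login-fix"))
    print(is_safe_branch_name(malicious))
```

The allowlist rejects anything unexpected rather than trying to escape every dangerous character. Avoiding the shell entirely is the stronger safeguard, because it still protects the command if a validation rule is missed.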

OpenAI identified the flaw on February 20, 2026, and fixed it on February 21, 2026. The risk is amplified when the technique is embedded in custom GPTs, because the malicious logic can be built into the GPT itself instead of relying on tricking a user into pasting a specially crafted prompt. The ChatGPT website, Codex CLI, Codex SDK, and Codex IDE Extension were all affected.

OpenAI fixed the issue on February 5, 2026, after it was reported on December 16, 2025. The research highlights a growing risk: because AI coding agents hold privileged access, they can be turned into a "scalable attack path" into enterprise systems without triggering conventional security controls.

BeyondTrust said, "As AI agents become more deeply integrated into developer workflows, the security of the containers they run in and the input they consume must be treated with the same rigor as any other application security boundary." "The attack surface is getting bigger, and... the security in these places needs to keep up with the attack surface," the cybersecurity vendor added.