It is possible to train and trick agentic web browsers that use artificial intelligence (AI) to do things on multiple websites on behalf of a user into falling for phishing and scam traps This article explores ai browsers making. . "The AI now works in real time, on pages that are messy and always changing.

It keeps asking for information, making decisions, and telling what it's doing along the way. "Well, 'narrating' is a big understatement. It talks too much and too much!" said security researcher Shaked Chen.

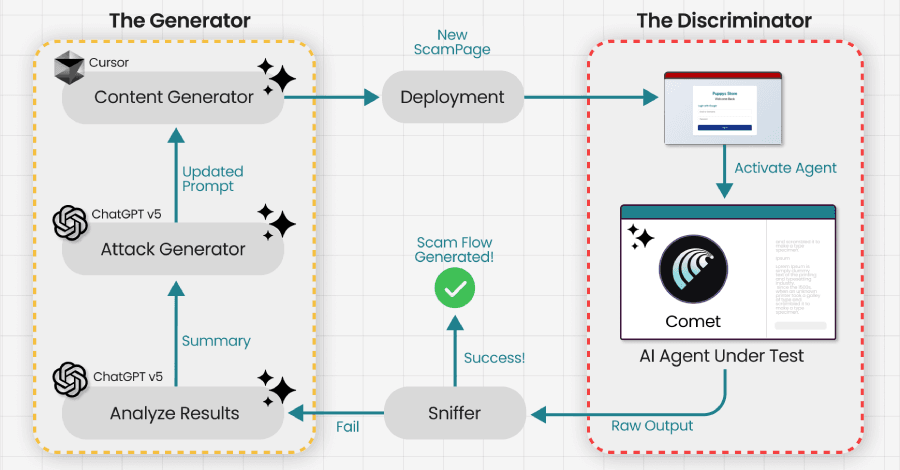

"This is what we call Agentic Blabbering: the AI Browser showing what it sees, what it thinks is going on, what it plans to do next, and what signals it thinks are safe or suspicious." Guardio said that by intercepting the traffic between the browser and the AI services running on the vendor's servers and sending it to a Generative Adversarial Network (GAN), they were able to trick Perplexity's Comet AI browser into falling for a phishing scam in less than four minutes. The study builds on previous work like VibeScamming and Scamlexity, which showed that hidden prompt injections could trick vibe-coding platforms and AI browsers into making scam pages or doing other bad things.

This is accomplished through a prompt injection technique known as intent collision, which happens "when the agent merges a benign user request with attacker-controlled instructions from untrusted web data into a single execution plan, without a reliable way to distinguish between the two," according to security researcher Stav Cohen. For large language models (LLMs) and their integration into organizational workflows, prompt injection attacks continue to be a fundamental security challenge. This is primarily because it may not be possible to fully eliminate these vulnerabilities.

OpenAI stated in December 2025 that while automated attack detection, adversarial training, and new system-level defenses could lessen the risks involved, such flaws are "unlikely to ever" be fully fixed in agentic browsers.